Agent Harness: The Infrastructure Layer That Makes AI Actually Work

1. The AI Reliability Problem

You've seen the demos. An AI agent writes code, browses the web, makes decisions — all autonomously. It looks magical. Then you try to run it in production for a real task with 50+ steps, and it quietly goes off the rails.

This is the reliability gap. Models are getting smarter, but smart alone doesn't mean reliable. Benchmarks measure one-shot performance. Real production tasks are multi-step, long-running, and full of edge cases.

Think of it this way: A Formula 1 engine is incredible. But without a chassis, steering wheel, brakes, and tires, it doesn't go anywhere useful. The engine is the model. Everything else is the harness.

The question developers need to answer in 2026 isn't "which model is best?" It's "how do we wrap models so they work reliably?" That's what an agent harness solves.

2. What Is an Agent Harness?

An agent harness is the complete infrastructure that wraps around an AI model to manage long-running tasks. It is not the model itself. It is everything else the model needs to work reliably in the real world.

Agent = Model + Harness

The model generates responses. The harness handles everything else: memory between sessions, which tools the model can access, guardrails that prevent catastrophic failures, the feedback loops that help it self-correct, and the observability layer that lets humans monitor what's happening.

If you've used Claude Code, you've experienced a harness. What makes it powerful isn't Claude alone — it's the harness around Claude: context management, filesystem controls, tool orchestration, session persistence, and the permission model that keeps it safe.

    harness/
    ├── context/      # memory, session state, compaction
    ├── tools/        # what the agent can do
    ├── guardrails/   # what the agent must not do
    ├── planner/      # how tasks are broken down
    ├── evaluator/    # checking output quality
    └── lifecycle/    # start, handoff, end of sessions

3. Harness vs. Orchestrator — What's the Difference?

This trips up a lot of developers. The terms sound similar but they operate at different layers.

| | Orchestrator | Harness |
|---|---|---|
| Concern | Logic and control flow | Capabilities and infrastructure |
| Does what | Decides what to do next | Gives the model its tools |
| Manages | Task sequencing, routing | Memory, context, side-effects |
| Enforces | Reasoning loop (ReAct, etc.) | Guardrails, permissions |
| Analogy | The brain of the operation | The hands and infrastructure |

They work together. The orchestrator says "invoke the model with this prompt." The harness ensures that when the model is invoked, it has the right tools, context, and environment. You need both. Improving either one dramatically improves real-world performance.
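
The split between the two layers can be sketched in a few lines. This is a minimal illustration, not a reference design: Harness, call_tool, and orchestrate are hypothetical names, and the fixed plan stands in for decisions a model would normally make.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Harness:
    """Infrastructure layer: owns tools, permissions, and the context log."""
    tools: dict[str, Callable[[str], str]]
    blocked: set[str] = field(default_factory=set)
    context: list[str] = field(default_factory=list)

    def call_tool(self, name: str, arg: str) -> str:
        if name in self.blocked or name not in self.tools:
            result = f"error: tool '{name}' is not permitted"
        else:
            result = self.tools[name](arg)
        self.context.append(f"{name}({arg}) -> {result}")  # record the side effect
        return result

def orchestrate(harness: Harness, plan: list[tuple[str, str]]) -> list[str]:
    """Control-flow layer: decides which step runs next (here, a fixed plan)."""
    return [harness.call_tool(name, arg) for name, arg in plan]

# Hypothetical tools: "rm" exists but the harness refuses to expose it.
harness = Harness(tools={"echo": lambda s: s, "rm": lambda s: "deleted"}, blocked={"rm"})
results = orchestrate(harness, [("echo", "hi"), ("rm", "/tmp/x")])
```

The orchestrator never checks permissions itself; it only sequences calls, and the harness decides what each call is allowed to do.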

4. The 5 Core Components of a Good Harness

Production-grade harnesses are built around five key responsibilities. Neglect any one of them, and reliability breaks down.

Component 1: Human-in-the-loop controls

Agents must pause at high-stakes decisions. Deleting a database, charging a credit card, sending emails to customers — these need human approval. A harness defines exactly where those checkpoints are and blocks execution until a human confirms.
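
A checkpoint like this can be as small as a wrapper around the action. The sketch below assumes a hypothetical set of action names and an approve callback supplied by the caller; how approval is actually collected (CLI prompt, review queue, chat message) is left open.

```python
from typing import Callable

# Hypothetical action names; a real harness would load these from policy config.
HIGH_STAKES = {"delete_database", "charge_card", "email_customers"}

class ApprovalDenied(Exception):
    """Raised when a human declines a high-stakes action."""

def run_action(name: str, action: Callable[[], str], approve: Callable[[str], bool]) -> str:
    """Run `action`, but block on `approve` first if the action is high-stakes."""
    if name in HIGH_STAKES and not approve(name):
        raise ApprovalDenied(f"'{name}' was not approved")
    return action()
```

Low-stakes actions pass straight through, so the human is only interrupted where the policy says it matters.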

Component 2: Context and memory management

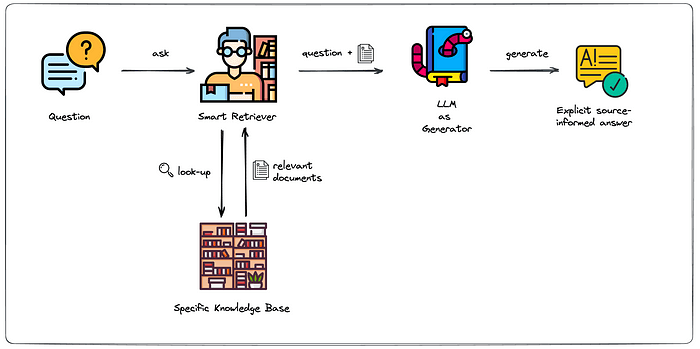

LLMs have no memory between sessions by default. A harness solves this with context compaction, session handoff artifacts, and dynamic retrieval (RAG). Anthropic's harness maintains a claude-progress.txt log so long tasks can resume where they left off.

Component 3: Tool call orchestration

Bad orchestration creates infinite loops and cascading failures. Good harnesses define which tools are available, when to use them, the correct order, and how to handle errors gracefully. Vercel famously removed 80% of their agent's tools and got better results — fewer choices, fewer mistakes.
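
Two of these safeguards, a step budget against infinite loops and error capture against cascading failures, can be sketched as follows. The function name, the budget of 20, and the error-string format are assumptions for illustration only.

```python
from typing import Callable, Iterable

class StepBudgetExceeded(Exception):
    """Raised when an agent run uses more tool calls than allowed."""

def run_tool_calls(
    calls: Iterable[tuple[str, str]],
    tools: dict[str, Callable[[str], str]],
    max_steps: int = 20,
) -> list[str]:
    results = []
    for step, (name, arg) in enumerate(calls):
        if step >= max_steps:
            raise StepBudgetExceeded(f"stopped after {max_steps} tool calls")
        tool = tools.get(name)
        if tool is None:
            results.append(f"error: unknown tool '{name}'")
            continue
        try:
            results.append(tool(arg))
        except Exception as exc:  # report the failure to the model instead of crashing
            results.append(f"error: {name} failed: {exc}")
    return results
```

Returning errors as results lets the model see what went wrong and choose a different tool, while the budget guarantees the run terminates.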

Component 4: Sub-agent coordination

Complex tasks need specialized agents. One researches, another writes, a third reviews. The harness manages communication between them, merges their outputs, and resolves conflicts.

Component 5: Prompt preset management

Different tasks need different instructions. A harness stores, versions, and selects the right system prompt for each task type — rather than pasting the same monolithic prompt everywhere.
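
One way to picture this is a small in-memory registry keyed by task type. PromptPreset and PresetRegistry are hypothetical names; a production harness would more likely keep presets in versioned files or a database.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class PromptPreset:
    task_type: str
    version: int
    system_prompt: str

class PresetRegistry:
    def __init__(self) -> None:
        self._presets: dict[str, list[PromptPreset]] = {}

    def register(self, task_type: str, system_prompt: str) -> PromptPreset:
        """Store a new version of the prompt for this task type."""
        versions = self._presets.setdefault(task_type, [])
        preset = PromptPreset(task_type, len(versions) + 1, system_prompt)
        versions.append(preset)
        return preset

    def select(self, task_type: str, version: Optional[int] = None) -> PromptPreset:
        """Return the latest preset, or a pinned version if one is given."""
        versions = self._presets[task_type]
        return versions[-1] if version is None else versions[version - 1]
```

Pinning a version makes it possible to roll back a prompt change without redeploying the rest of the harness.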

5. Advanced Pattern: Persistent Memory

By default, every time you start a new session with an LLM, it has no idea who you are, what you worked on yesterday, or what bugs you fixed last week. The model is stateless. Persistent memory is the harness layer that fixes this.

This isn't about stuffing old conversations into the context window — that's expensive and hits limits fast. It's about selectively storing, indexing, and retrieving the right memories at the right time.

The three layers of memory

L1: In-context memory (ephemeral). What's currently in the context window. Fast, but lost when the session ends. The harness manages what lives here via compaction: summarizing older turns, dropping irrelevant tool outputs, and keeping only what the model needs right now.

L2: External memory store (session-persistent). A vector database (Pinecone, pgvector, Chroma) or key-value store that survives session boundaries. The harness writes summaries, decisions, and facts here, then retrieves them via semantic search when starting a new session.

L3: Structured state (long-term). A progress file or structured JSON document the harness maintains across days. Anthropic's Claude Code harness uses a claude-progress.txt for exactly this: a human-readable, agent-writable log of what has been done, what is pending, and what decisions were made.
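
Compaction at the in-context layer can be sketched as "keep the newest turns, replace the rest with a summary". The function below is an assumption-laden illustration: the default summarizer is a placeholder string, where a real harness would call a model to write the summary.

```python
from typing import Callable, Optional

def compact(
    turns: list[str],
    keep_recent: int = 4,
    summarize: Optional[Callable[[list[str]], str]] = None,
) -> list[str]:
    """Replace all but the newest `keep_recent` turns with one summary turn."""
    cut = len(turns) - keep_recent
    if cut <= 0:
        return list(turns)  # nothing old enough to compact
    older, recent = turns[:cut], turns[cut:]
    if summarize is None:
        # Placeholder summarizer; stands in for a model-generated summary.
        summarize = lambda ts: f"[summary of {len(ts)} earlier turns]"
    return [summarize(older)] + recent

compacted = compact(["a", "b", "c", "d", "e", "f"], keep_recent=2)
```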

How it works end-to-end

Session start: Harness queries L2/L3 for relevant memories → injects them into the system prompt → agent starts informed, not blank.

Session end: Harness extracts key facts, decisions, and unfinished tasks → compresses them → writes to L2/L3 → next session picks up exactly here.
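
The start and end hooks can be sketched against a single JSON progress file. Everything here is an assumption for illustration: the file name, the three state keys, and the prompt wording are not taken from any particular harness.

```python
import json
import tempfile
from pathlib import Path

def end_session(path: Path, done: list[str], pending: list[str], decisions: list[str]) -> None:
    """Persist what the next session needs to know (the structured-state layer)."""
    state = {"done": done, "pending": pending, "decisions": decisions}
    path.write_text(json.dumps(state, indent=2))

def start_session(path: Path, base_prompt: str) -> str:
    """Build the system prompt, appending saved state if any exists."""
    if not path.exists():
        return base_prompt  # first session: nothing to restore
    state = json.loads(path.read_text())
    notes = "\n".join(f"{key}: {'; '.join(items)}" for key, items in state.items())
    return f"{base_prompt}\n\nState from previous session:\n{notes}"

# Usage: the second session starts with the first session's state in its prompt.
with tempfile.TemporaryDirectory() as tmp:
    progress = Path(tmp) / "progress.json"
    first_prompt = start_session(progress, "You are a coding agent.")
    end_session(progress, done=["fix login bug"], pending=["add tests"], decisions=["use JWT"])
    second_prompt = start_session(progress, "You are a coding agent.")
```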

Tradeoffs

| Advantages | Risks |
|---|---|
| Agent builds team-wide context over time | Stale memories can mislead the agent |
| No repeated re-explanation across sessions | Retrieval quality depends on embedding model |
| Faster task start: agent arrives informed | Privacy: memories may contain sensitive code |
| Survives model swaps: memory is external | Storage grows unboundedly without pruning |
| Human-readable audit trail of decisions | Debugging retrieval failures is non-trivial |

Critical implementation note: Never inject all memories — inject only what's relevant to the current task. Keep retrieved memory injections under ~500 tokens. Use tags and metadata filters aggressively.
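
A budgeted selection step might look like the sketch below. The Memory shape, the relevance score field, and the four-characters-per-token estimate are all assumptions; a real harness would use its tokenizer and its retriever's scores.

```python
from dataclasses import dataclass

@dataclass
class Memory:
    text: str
    tags: frozenset
    score: float  # relevance from retrieval; higher is better (assumed)

def estimate_tokens(text: str) -> int:
    # Crude heuristic, assumed for illustration: about 4 characters per token.
    return max(1, len(text) // 4)

def select_memories(memories: list, task_tags: set, budget: int = 500) -> list:
    """Filter by tag, then greedily take the most relevant memories that fit the budget."""
    relevant = [m for m in memories if m.tags & task_tags]
    chosen, used = [], 0
    for m in sorted(relevant, key=lambda m: m.score, reverse=True):
        cost = estimate_tokens(m.text)
        if used + cost <= budget:
            chosen.append(m)
            used += cost
    return chosen
```

The metadata filter runs before ranking, so an off-topic memory never competes for the budget no matter how high its similarity score is.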

6. Advanced Pattern: Bug Knowledge Base

Every developer has lived this: you spend three hours debugging a cryptic error, find the fix, close the ticket — and six months later a colleague hits the exact same bug and spends three hours on it too. The knowledge died with the PR comment.

A bug knowledge base is a harness component that captures bug-fix pairs at the moment of resolution and makes them retrievable — by the agent, for any future developer, automatically. This turns individual debugging effort into compounding team intelligence.

The data model

BugEntry fields:

| Field | Type | Description |
|---|---|---|
| bug_id | string | Linked GitHub issue or Jira ticket ID |
| error_signature | string | Canonical error message or symptom description |
| root_cause | string | Why the bug occurred (human- or agent-authored) |
| fix_summary | string | What was changed and why, in plain language |
| diff | string | Actual code diff (sanitized of secrets) |
| affected_files | string[] | Files involved, for scoped retrieval |
| tags | string[] | e.g. ["auth", "race-condition", "postgres"] |
| resolved_by | string | Developer or agent, for attribution |
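
The same fields map directly onto a typed record. This is a minimal sketch: the field names come from the list above, while the defaults and the to_json helper are assumptions added for convenience.

```python
import json
from dataclasses import asdict, dataclass, field

@dataclass
class BugEntry:
    bug_id: str
    error_signature: str
    root_cause: str
    fix_summary: str
    diff: str = ""  # should already be sanitized of secrets before storage
    affected_files: list[str] = field(default_factory=list)
    tags: list[str] = field(default_factory=list)
    resolved_by: str = ""

    def to_json(self) -> str:
        """Serialize to one JSON object, e.g. for a JSONL store."""
        return json.dumps(asdict(self))

entry = BugEntry(
    bug_id="BUG-2026-042",
    error_signature="TypeError: Cannot read properties of undefined (reading 'token')",
    root_cause="JWT refresh ran before user session was hydrated.",
    fix_summary="Awaited session.init() before token.verify() in middleware.",
    tags=["auth", "race-condition"],
)
```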

The four capture points

Capture 1: Merged PR (automatic). When a PR labelled bug-fix merges into main, a GitHub Actions workflow triggers. An extraction agent reads the diff and PR description, structures them into a BugEntry, and writes it to the KB. Zero manual effort after the label is applied.

Capture 2: Agent runtime error (automatic). When an agent hits an exception mid-task, the harness error hook intercepts it before the agent attempts a fix. It queries the KB for similar past bugs and injects the top matches into context, so the agent starts from known fixes instead of from scratch.

Capture 3: Manual developer submission (on-demand). For bugs fixed outside normal PRs (hotfixes, config changes, infrastructure bugs, tribal knowledge), a developer submits an entry directly via a CLI script or internal tool.

Capture 4: Post-fix agent write-back (automatic feedback loop). After the debugging agent resolves an issue, the harness writes the new bug-fix pair back to the KB. Every new fix the agent makes enriches the KB for the next run.
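
The runtime error hook and the write-back can be combined in one wrapper. The sketch below is illustrative only: run_with_bug_kb, the callback signatures, and the in-memory list used as a KB are all hypothetical.

```python
from typing import Callable

def run_with_bug_kb(
    task: Callable[[], str],
    fix: Callable[[str, list], tuple],
    retrieve: Callable[[str], list],
    store: Callable[[dict], None],
) -> str:
    """Run the task; on failure, look up similar bugs, attempt a fix, and record it."""
    try:
        return task()
    except Exception as exc:
        signature = f"{type(exc).__name__}: {exc}"
        similar = retrieve(signature)                    # error hook: fetch past fixes
        result, fix_summary = fix(signature, similar)    # agent attempts a fix with that context
        store({                                          # write-back: record the new pair
            "error_signature": signature,
            "fix_summary": fix_summary,
            "resolved_by": "agent",
        })
        return result

# Usage with an in-memory list standing in for the knowledge base.
kb = []

def failing_task() -> str:
    raise KeyError("token")

result = run_with_bug_kb(
    task=failing_task,
    fix=lambda sig, similar: ("recovered", f"handled {sig}"),
    retrieve=lambda sig: [e for e in kb if e["error_signature"] == sig],
    store=kb.append,
)
```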

Choosing your storage backend — four tiers

The vector DB is just one option. Start at Tier 1 and migrate only when you feel the limitations. These tiers are additive — Markdown is always the source of truth.

Tier 1 — Markdown files in the repo ✅ Recommended to start

One .md file per bug inside a .bugs/ directory. Git-native, versioned, PR-reviewable, human-editable. Agent retrieves via grep.

When to use: Small teams (<10 devs), <300 bugs, or just getting started. Zero friction.

    <!-- .bugs/BUG-2026-042.md -->
    ## Error
    TypeError: Cannot read properties of undefined (reading 'token')
      at AuthMiddleware.verify (src/auth/middleware.ts:34)

    ## Root cause
    JWT refresh ran before user session was hydrated.
    Race condition between session.init() and token.verify().

    ## Fix
    Awaited session.init() before token.verify() in middleware.
    Added guard: if (!session.ready) throw new SessionNotReady().

    ## Files changed
    src/auth/middleware.ts, src/session/index.ts

    ## Tags
    auth, race-condition, jwt, async

    ## Resolved by
    @priya — 2026-03-18 — BUG-2026-042
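
For manual submissions, a small script can write files in this layout. The sketch below is one possible shape, not an existing tool: render_bug_md and save_bug_md are hypothetical helpers, and the entry is assumed to be a dict using the BugEntry field names.

```python
import tempfile
from pathlib import Path

def render_bug_md(entry: dict) -> str:
    """Render a bug entry using the same section headings as the sample file."""
    return "\n".join([
        "## Error", entry["error_signature"], "",
        "## Root cause", entry["root_cause"], "",
        "## Fix", entry["fix_summary"], "",
        "## Files changed", ", ".join(entry["affected_files"]), "",
        "## Tags", ", ".join(entry["tags"]), "",
        "## Resolved by", entry["resolved_by"], "",
    ])

def save_bug_md(entry: dict, bugs_dir: str = ".bugs") -> Path:
    """Write one Markdown file per bug, named after its bug_id."""
    directory = Path(bugs_dir)
    directory.mkdir(parents=True, exist_ok=True)
    path = directory / f"{entry['bug_id']}.md"
    path.write_text(render_bug_md(entry))
    return path

# Usage: write into a temporary directory instead of the real repo.
sample = {
    "bug_id": "BUG-2026-042",
    "error_signature": "TypeError: Cannot read properties of undefined (reading 'token')",
    "root_cause": "JWT refresh ran before user session was hydrated.",
    "fix_summary": "Awaited session.init() before token.verify() in middleware.",
    "affected_files": ["src/auth/middleware.ts", "src/session/index.ts"],
    "tags": ["auth", "jwt"],
    "resolved_by": "@priya",
}
with tempfile.TemporaryDirectory() as tmp:
    saved = save_bug_md(sample, tmp)
    saved_name, saved_text = saved.name, saved.read_text()
```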

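Harness retrieval (grep across .bugs/):

    import pathlib
    import subprocess
    from collections import Counter

    def retrieve_md(error: str, bugs_dir: str = ".bugs", k: int = 3) -> str:
        """Return the k bug files that match the most keywords from the error."""
        keywords = error.split()[:6]
        hits = Counter()
        for kw in keywords:
            out = subprocess.run(
                # -F matches literally (error text is not a regex); "--" stops a
                # keyword that starts with "-" from being parsed as an option.
                ["grep", "-rlF", "--", kw, bugs_dir],
                capture_output=True, text=True,
            ).stdout
            hits.update(out.splitlines())
        # Rank files by how many keywords they matched, not by arbitrary set order.
        top = [path for path, _ in hits.most_common(k)]
        docs = [pathlib.Path(p).read_text() for p in top]
        return "\n\n---\n\n".join(docs)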

| Advantages | Limitations |
|---|---|
| Lives in repo, versioned in Git | Keyword retrieval only, no semantic search |
| PRs review the KB alongside code | Slow at scale (500+ files) |
| Devs read and edit directly | Misses paraphrase matches |
| Zero infra, works offline | Duplicate detection is manual |

Tier 2 — Markdown source + SQLite FTS5 index ✅ Recommended at scale

Markdown stays the human-readable source of truth. A SQLite FTS5 database provides fast full-text search. Index is rebuilt by CI when .bugs/*.md files change. No external services.

When to use: 300–2000 bugs, or when grep is getting slow.

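    # index_bugs.py: run in CI on .bugs/ changes
    import glob
    import pathlib
    import re
    import sqlite3

    def parse_md_sections(text: str) -> dict:
        """Split a bug file into {heading: body} on '## ' headings."""
        sections, current = {}, None
        for line in text.splitlines():
            if line.startswith("## "):
                current = line[3:].strip()
                sections[current] = []
            elif current is not None:
                sections[current].append(line)
        return {name: "\n".join(body).strip() for name, body in sections.items()}

    conn = sqlite3.connect("bugs.db")
    conn.execute("""
        CREATE VIRTUAL TABLE IF NOT EXISTS bugs USING fts5(
            bug_id, error_signature, root_cause, fix_summary, tags
        )""")
    # FTS5 has no unique key on bug_id, so INSERT OR REPLACE would add duplicate
    # rows on every run; clear the table and rebuild from the Markdown source.
    conn.execute("DELETE FROM bugs")
    for path in glob.glob(".bugs/*.md"):
        sections = parse_md_sections(pathlib.Path(path).read_text())
        conn.execute(
            "INSERT INTO bugs VALUES (?,?,?,?,?)",
            (pathlib.Path(path).stem,
             sections.get("Error", ""), sections.get("Root cause", ""),
             sections.get("Fix", ""), sections.get("Tags", "")),
        )
    conn.commit()

    # Retrieval: FTS5 ranked full-text search
    def retrieve_fts(query: str):
        # Quote each word so punctuation in error text (':', '(', "'") is not
        # parsed as FTS5 query syntax; OR the words so partial matches still rank.
        words = re.findall(r"\w+", query)
        if not words:
            return []
        match = " OR ".join(f'"{w}"' for w in words)
        return conn.execute(
            "SELECT * FROM bugs WHERE bugs MATCH ? ORDER BY rank LIMIT 3",
            (match,),
        ).fetchall()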

| Advantages | Limitations |
|---|---|
| Markdown stays editable and readable | Index must rebuild when files change |
| SQLite is a single local file, no server | Still keyword-based, not semantic |
| FTS5 is very fast at 10,000+ entries | Two things to keep in sync |
| No embedding model or API needed | Misses paraphrase matches |

Tier 3 — JSONL flat file (Agent-heavy teams)

One JSON object per line, append-only. Best as the agent write target, with markdown as the human read layer.

When to use: Agents are writing frequently, or you want zero-overhead append writes.

    import json

    # Retrieval by tag filter
    def retrieve_jsonl(query_tags: list, path="bugs.jsonl"):
        results = []
        with open(path) as f:
            for line in f:
                if not line.strip():
                    continue  # tolerate blank lines in the file
                bug = json.loads(line)
                if any(t in bug["tags"] for t in query_tags):
                    results.append(bug)
        return results[:3]

    # Write: agent appends after fixing a bug
    def store_jsonl(entry: dict, path="bugs.jsonl"):
        with open(path, "a") as f:
            f.write(json.dumps(entry) + "\n")

Tier 4 — Vector database (1000+ bugs, semantic search)

Embeddings-based similarity search. Finds bugs even when wording differs. Add this on top of markdown, never instead of it.

When to use: 1000+ bugs, or when keyword search misses too many relevant matches.

    import json

    import chromadb
    from sentence_transformers import SentenceTransformer

    encoder = SentenceTransformer("all-MiniLM-L6-v2")
    db = chromadb.PersistentClient(path="./bug_kb")
    bugs = db.get_or_create_collection("bug_entries")

    def store_vector(entry: dict):
        # Embed the symptom and the cause together so either can match a query
        text = entry["error_signature"] + " " + entry["root_cause"]
        embedding = encoder.encode(text).tolist()
        # upsert rather than add, so re-storing an existing bug_id overwrites it
        bugs.upsert(
            ids=[entry["bug_id"]],
            embeddings=[embedding],
            documents=[json.dumps(entry)],
            # tags are serialized to a string for the metadata field
            metadatas=[{"tags": json.dumps(entry["tags"])}],
        )

    def retrieve_vector(error: str, k=3) -> list:
        embedding = encoder.encode(error).tolist()
        results = bugs.query(query_embeddings=[embedding], n_results=k)
        return [json.loads(d) for d in results["documents"][0]]

Decision guide

| Situation | Recommended approach |
|---|---|
| Greenfield, any size team | Start with Markdown files |
| Grep getting slow (>300 bugs) | Add SQLite FTS5 index |
| Agent writing frequently | JSONL as write target + Markdown for humans |
| Semantic misses (>1000 bugs) | Add Vector DB on top of Markdown |
| Migrating tiers later | Markdown is always the source of truth |

Don't start with a vector DB. The bottleneck on a young codebase is knowledge capture, not retrieval speed. A .bugs/ folder with 50 Markdown files and a grep retriever will outperform an over-engineered vector store on day one.

7. Real-World Examples from Production

Anthropic — Claude Code: the three-agent harness

Anthropic uses a multi-agent harness for long-running coding tasks. One agent plans, one generates code, and one evaluates quality. Context resets are paired with structured handoff artifacts — so the next agent starts from a known, clean state. This solved the classic problem of context drift over multi-hour sessions.
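
The plan, generate, evaluate pattern with handoff artifacts can be sketched generically. This is not Anthropic's implementation; run_three_agent, the handoff dict keys, and the round limit are assumptions chosen to show the shape of the loop.

```python
from typing import Callable

def run_three_agent(
    task: str,
    planner: Callable[[str], list],
    generator: Callable[[dict], str],
    evaluator: Callable[[dict, str], tuple],
    max_rounds: int = 3,
) -> dict:
    """Plan once, then alternate generate/evaluate, passing only a handoff artifact."""
    handoff = {"task": task, "plan": planner(task), "feedback": None}
    output = None
    for round_no in range(1, max_rounds + 1):
        # The generator sees only the handoff artifact, not earlier conversation,
        # which is what keeps each round starting from a known state.
        output = generator(handoff)
        accepted, feedback = evaluator(handoff, output)
        if accepted:
            return {"output": output, "rounds": round_no, "accepted": True}
        handoff = {**handoff, "feedback": feedback}
    return {"output": output, "rounds": max_rounds, "accepted": False}

# Usage with stub agents: the first draft is rejected, the revision is accepted.
outcome = run_three_agent(
    task="add retry logic",
    planner=lambda task: ["edit client", "add test"],
    generator=lambda h: "draft" if h["feedback"] is None else "revised",
    evaluator=lambda h, out: (out == "revised", "missing test"),
)
```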

Manus: 5 harness rewrites, same model, 5× better reliability

Manus rewrote their harness architecture five times in six months. The underlying model didn't change. Each rewrite improved task completion rates purely through better structure: smarter context handling, tighter tool definitions, and cleaner sub-agent coordination. The model was never the bottleneck.

Microsoft — Azure SRE Agent: 40.5 hours to 3 minutes

Microsoft's SRE agent harness wires MCP tools, telemetry, code repos, and incident management into a single pipeline. "Intent Met" score rose from 45% to 75% on novel incidents after shifting from bespoke tooling to a file-based context system. The system has handled 35,000+ production incidents autonomously.

Vercel: subtraction as harness improvement

Vercel's team removed 80% of their agent's available tools. The result: fewer steps, fewer tokens, faster responses, and higher task success rate. Right-sizing the toolset is a harness decision, not a model decision.

8. Harness Engineering as a Discipline

Harness engineering is now a standalone discipline — distinct from MLOps and DevOps, though it borrows from both.

MLOps — model performance over time (training, deployment, retraining)

DevOps — software delivery pipelines (CI/CD, infrastructure)

Harness engineering — agent behavior in real-time execution, right now, on this task

Key tools as of early 2026:

Claude Agent SDK → general-purpose harness, built-in context mgmt

CrewAI Flows → event-driven multi-agent orchestration

LangChain → composable harness primitives

AutoHarness → automated harness engineering (6-step governance)

AutoAgent → meta-agent that writes and optimizes its own harness

Emerging role: "Harness engineer" is entering job descriptions at companies building agent-powered products. The skillset combines software engineering with AI-specific knowledge of context management, prompt design, and agent evaluation.

9. Why the Harness Is the Moat, Not the Model

The model is becoming a commodity. Claude, GPT, Gemini — on static benchmarks, the gap is shrinking fast. The real differentiation is now infrastructure.

| Metric | Result |
|---|---|
| Manus harness rewrites, same model | 5× reliability improvement |
| Tools Vercel removed to improve reliability | 80% |
| Microsoft SRE "Intent Met" score improvement | +30 percentage points (45% to 75%) |
| Benchmark swing from harness setup alone | 5+ points |

All of these came from changing the harness — not the model. You can fine-tune a competitive model in weeks. Building production-ready harnesses takes months or years. That's the moat.

10. The Future: Self-Optimizing Harnesses

AutoAgent (April 2026) lets a meta-agent build and iterate on a harness autonomously overnight — modifying the system prompt, tools, and orchestration, running benchmarks, and keeping only changes that improve scores. In a 24-hour run, it hit #1 on SpreadsheetBench (96.5%) and top score on TerminalBench (55.1%) — beating every hand-engineered entry.
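
The "keep only changes that improve scores" idea is, at its core, a hill-climbing loop. The sketch below illustrates that general technique only; it is not AutoAgent's actual algorithm, and optimize, mutate, and score are hypothetical names with a toy numeric config standing in for a harness.

```python
import random
from typing import Any, Callable

def optimize(
    config: Any,
    mutate: Callable[[Any, random.Random], Any],
    score: Callable[[Any], float],
    iterations: int = 50,
    seed: int = 0,
) -> tuple:
    """Propose a change, benchmark it, and keep it only if the score improves."""
    rng = random.Random(seed)
    best, best_score = config, score(config)
    for _ in range(iterations):
        candidate = mutate(best, rng)
        candidate_score = score(candidate)
        if candidate_score > best_score:  # discard anything that does not help
            best, best_score = candidate, candidate_score
    return best, best_score

# Toy usage: the "harness" is a single number and the benchmark peaks at 10.
toy_score = lambda x: -(x - 10) ** 2
toy_mutate = lambda x, rng: x + rng.choice([-1, 1])
best, best_score = optimize(0, toy_mutate, toy_score, iterations=200)
```

In a real setting the score function is the expensive part, since each call means running a benchmark suite against the candidate harness.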

The human's job shifted from "engineer who edits agent.py" to "director who writes program.md."

Looking ahead: harnesses will become the primary tool for solving model drift — detecting exactly when a model stops reasoning correctly after its 100th step, feeding that data back into training. We're heading toward a convergence of training and inference environments, and the harness is at the center of that shift.

11. Conclusion

2025 proved agents could work. 2026 is about making them work reliably at scale. The model is a component. The harness is the system.

Constrain what agents can do. Inform them about what they should do. Verify their work. Correct their mistakes. Keep humans in the loop at high-stakes decisions. This is harness engineering — and it's the most important infrastructure skill for developers building AI products right now.

The engine matters. But the car is what wins races.

[Thoughts by Anish, rephrased by Claude]

Tags: agent-harness · AI infrastructure · LLM · harness-engineering · claude-code · multi-agent · developer